Oral

Prostate

ISMRM & SMRT Annual Meeting • 15-20 May 2021

| Concurrent 5 | 16:00 - 18:00 | Moderators: Francesco Giganti & Susan Noworolski |

0813.

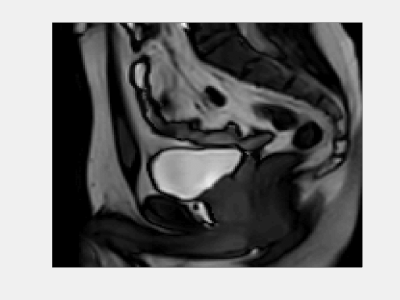

CycleSeg: MR-to-CT Synthesis and Segmentation Network for Prostate Radiotherapy Treatment Planning

Huan Minh Luu1, Gyu-sang Yoo2, Dong-Hyun Kim1, Won Park2, and Sung-Hong Park1

1Magnetic Resonance Imaging Laboratory, Department of Bio and Brain Engineering, Korea Advanced Institute of Science and Technology, Daejeon, Korea, Republic of, 2Department of Radiation Oncology, Samsung Medical Center, Seoul, Korea, Republic of

MR-only radiotherapy planning can reduce the radiation exposure from repeated CT scanning. Most research focuses on generating synthetic CT images from MR images but not on contouring the organs of interest in those images, which requires manual labor and expertise. In this study, we propose CycleSeg, a CycleGAN-based network that accomplishes both tasks to streamline the process and reduce human effort. Experiments showed that CycleSeg can generate realistic synthetic CT images along with accurate organ segmentation in the pelvis of patients with prostate cancer.

0814.

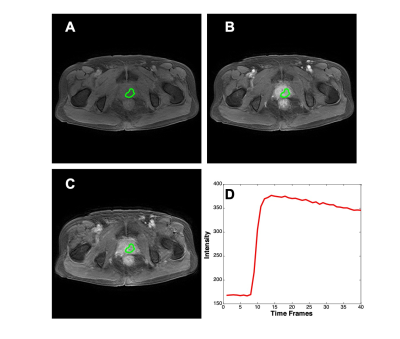

Differential Diagnosis of Prostate Cancer and Benign Prostatic Hyperplasia Based on Prostate DCE-MRI by Using Deep Learning with Different Peritumoral Areas

Yang Zhang1,2, Weikang Li3, Zhao Zhang3, Yingnan Xue3, Yan-Lin Liu2, Peter Chang2, Daniel Chow2, Ke Nie1, Min-Ying Su2, and Qiong Ye3,4

1Department of Radiation Oncology, Rutgers-Cancer Institute of New Jersey, Robert Wood Johnson Medical School, New Brunswick, NJ, United States, 2Department of Radiological Sciences, University of California, Irvine, CA, United States, 3Department of Radiology, The First Affiliated Hospital of Wenzhou Medical University, Wenzhou, China, 4High Magnetic Field Laboratory, Hefei Institutes of Physical Science, Chinese Academy of Sciences, Hefei, China

A bi-directional Convolutional Long Short-Term Memory (CLSTM) network was previously shown to be capable of differentiating prostate cancer from benign prostatic hyperplasia (BPH) based on DCE-MRI acquired with 40 time frames. The purpose of this work was to investigate the diagnostic value of peritumoral tissues. Several methods were used to expand the ROI to include peritumoral tissue surrounding the lesion, and the expanded ROIs were used as input to the diagnostic network. A total of 135 cases were analyzed, including 73 prostate cancer and 62 BPH cases. Based on 4-fold cross-validation, the region-growing-based ROI had the best performance, with a mean AUC of 0.89.
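
As a rough illustration of how a mean AUC over 4-fold cross-validation could be computed, the sketch below uses the rank-based (Mann-Whitney) definition of AUC in plain Python. The function names, the fold layout, and the toy scores are assumptions made for illustration; none of them come from the abstract.

```python
from statistics import mean


def auc(labels, scores):
    """Rank-based AUC: chance that a positive case outscores a negative one (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean_auc(folds):
    """Average the per-fold AUCs; `folds` is a list of (labels, scores) pairs."""
    return mean(auc(labels, scores) for labels, scores in folds)


# Hypothetical held-out predictions for four folds (1 = cancer, 0 = BPH).
toy_folds = [
    ([1, 1, 0, 0], [0.9, 0.7, 0.4, 0.2]),
    ([1, 0, 1, 0], [0.8, 0.6, 0.5, 0.1]),
    ([1, 1, 0, 0], [0.6, 0.3, 0.5, 0.2]),
    ([0, 1, 0, 1], [0.3, 0.9, 0.1, 0.7]),
]
print(round(mean_auc(toy_folds), 3))
```

Averaging per-fold AUCs is only one convention; pooling all held-out predictions before computing a single AUC is another, and the abstract does not say which was used.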

0815.

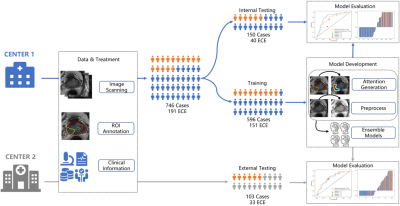

A Prior-Knowledge Embedded Convolutional Neural Network for Extracapsular Extension of the Prostate Cancer at Multi-Parametric MRI

Yihong Zhang1, Ying Hou2, Jie Bao3, Yang Song1, Yu-dong Zhang2, Xu Yan4, and Guang Yang1

1East China Normal University, Shanghai, China, 2the First Affiliated Hospital with Nanjing Medical University, Nanjing, China, 3the First Affiliated Hospital with Soochow University, Soochow, China, 4Siemens Healthcare, Shanghai, China

We proposed an algorithm that incorporates radiologists' prior knowledge about the location of extension into a CNN model to diagnose extracapsular extension of prostate cancer from multiparametric MRI (mpMRI). The model was trained on 596 cases with ensemble learning and then validated on an independent validation cohort of 150 cases and an external cohort of 103 cases. The proposed model achieved an area under the receiver operating characteristic curve (AUC) of 0.807/0.728 on the internal/external test cohorts, which is better than the traditional model (AUC=0.746/0.723) and the clinical reports by two radiologists (AUC=0.725, 0.632/0.694, 0.712).

0816.

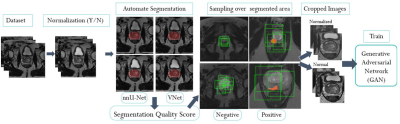

Prostate Cancer Detection on T2-weighted MR images with Generative Adversarial Networks

Alexandros Patsanis1, Mohammed R. S. Sunoqrot1, Elise Sandsmark2, Sverre Langørgen2, Helena Bertilsson3,4, Kirsten M. Selnæs1,2, Hao Wang5, Tone F. Bathen1,2, and Mattijs Elschot1,2

1Department of Circulation and Medical Imaging, Norwegian University of Science and Technology - NTNU, Trondheim, Norway, 2Department of Radiology and Nuclear Medicine, St. Olavs Hospital, Trondheim University Hospital, Trondheim, Norway, 3Department of Clinical and Molecular Medicine, Norwegian University of Science and Technology - NTNU, Trondheim, Norway, 4Department of Urology, St. Olavs Hospital, Trondheim University Hospital, Trondheim, Norway, 5Department of Computer Science, Norwegian University of Science and Technology - NTNU, Gjøvik, Norway

Generative Adversarial Networks (GANs) were evaluated for detection and visualization of prostate cancer as part of a proposed automated end-to-end pipeline. Two GANs were trained and tested with T2-weighted images from an in-house dataset of 646 patients. The weakly-supervised GAN performed better (AUC=0.785) than the unsupervised GAN (AUC=0.462). The performance of the GANs depended on pre-processing parameters. The PROSTATEx dataset (N=204) was used for external validation, giving an AUC of 0.642. The weakly-supervised GAN showed promise for detecting and localizing prostate cancer on T2W MRI, but further research is necessary to improve model performance and generalizability.

0817.

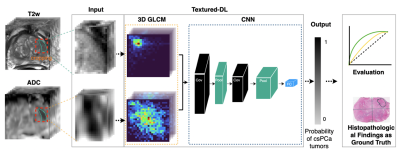

Texture-Based Deep Learning for Prostate Cancer Classification with Multiparametric MRI

Yongkai Liu1,2, Haoxin Zheng1, Zhengrong Liang3, Miao Qi1, Wayne Brisbane4, Leonard Marks4, Steven Raman1, Robert Reiter4, Guang Yang5, and Kyunghyun Sung1

1Department of Radiological Sciences, University of California, Los Angeles, Los Angeles, CA, United States, 2Physics and Biology in Medicine IDP, University of California, Los Angeles, Los Angeles, CA, United States, 3Departments of Radiology and Biomedical Engineering, Stony Brook University, Stony Brook, New York, NY, United States, 4Department of Urology, University of California, Los Angeles, Los Angeles, CA, United States, 5National Heart and Lung Institute, Imperial College London, London, United Kingdom

Accurate classification of prostate cancer (PCa) enables better prognosis and selection of treatment plans. We presented a texture-based deep learning method that enhances prostate cancer classification performance by enriching deep learning with prostate cancer texture information.

0818.

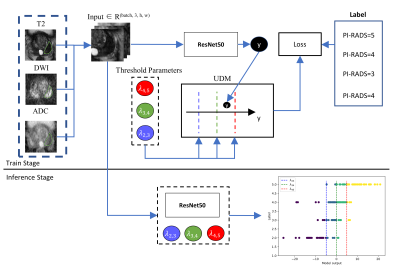

Incorporating UDM into Deep Learning for better PI-RADS v2 Assessment from Multi-parametric MRI

Ruiqi Yu1, Ying Hou2, Yang Song1, Yu-dong Zhang2, and Guang Yang1

1Shanghai Key Laboratory of Magnetic Resonance, East China Normal University, Shanghai, China, 2Department of Radiology, the First Affiliated Hospital with Nanjing Medical University, Jiangsu, China

Prostate cancer is one of the leading causes of cancer-related death among males. The Prostate Imaging Reporting and Data System (PI-RADS) v2 standardizes the acquisition of multi-parametric magnetic resonance images (mp-MRI) and the identification of clinically significant prostate cancer. We proposed a convolutional neural network that integrates an unsure data model (UDM) to predict the PI-RADS v2 score from mp-MRI. The model achieved an F1 score of 0.640, which is higher than that of ResNet-50. On an independent test cohort of 146 cases, our model achieved an accuracy of 64.4%.
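
For readers unfamiliar with the two metrics quoted above, the following minimal sketch shows how an F1 score and an accuracy could be computed for a multi-class score prediction. The abstract does not state how the F1 score was averaged across PI-RADS categories, so the macro averaging used here is an assumption, as are the function names and the toy labels.

```python
def f1_for_class(y_true, y_pred, cls):
    """F1 for one class, treating `cls` as positive and every other class as negative."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores (assumed averaging scheme)."""
    classes = sorted(set(y_true) | set(y_pred))
    return sum(f1_for_class(y_true, y_pred, c) for c in classes) / len(classes)


def accuracy(y_true, y_pred):
    """Fraction of cases whose predicted score matches the reference score."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical reference and predicted scores for six lesions.
reference = [2, 3, 3, 4, 4, 5]
predicted = [2, 3, 4, 4, 4, 5]
print(round(macro_f1(reference, predicted), 3), round(accuracy(reference, predicted), 3))
```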

0819.

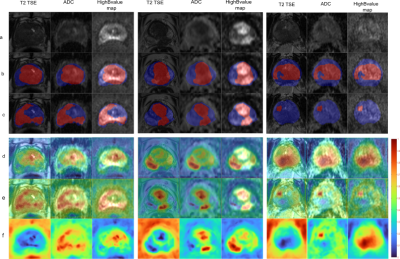

Explainable AI for CNN-based Prostate Tumor Segmentation in Multi-parametric MRI Correlated to Whole Mount Histopathology

Deepa Darshini Gunashekar1, Lars Bielak1,2, Arnie Berlin3, Leonard Hägele1, Benedict Oerther4, Matthias Benndorf4, Anca Grosu2,4, Constantinos Zamboglou2,4, and Michael Bock1,2

1Dept. of Radiology, Medical Physics, Medical Center University of Freiburg, Faculty of Medicine, University of Freiburg, Freiburg, Germany, 2German Cancer Consortium (DKTK), Partner Site Freiburg, Freiburg, Germany, 3The MathWorks, Inc., Novi, MI, United States, 4Dept. of Radiology, Medical Center University of Freiburg, Faculty of Medicine, University of Freiburg, Freiburg, Germany

An explainable deep learning model was implemented to interpret the predictions of a convolutional neural network (CNN) for prostate tumor segmentation. The CNN automatically segments the prostate gland and prostate tumors in multi-parametric MRI data using co-registered whole mount histopathology images as ground truth. For the interpretation of the CNN, saliency maps are generated by generalizing the Gradient Weighted Class Activation Maps method for prostate tumor segmentation. Evaluation of the saliency method indicates that the CNN was able to correctly localize the tumor and the prostate by targeting the pixels in the image deemed important for the CNN's prediction.
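
The core weighting step of the standard Grad-CAM method can be sketched in a few lines of plain Python. This is not the authors' generalized version: it assumes the feature maps of one layer and the gradients of some scalar score with respect to them are already available (for segmentation, that score might be a class logit summed over a region, which is an assumption here). The function name and the toy arrays are hypothetical.

```python
def grad_cam(activations, gradients):
    """Standard Grad-CAM map from K feature maps and their gradients.

    Both arguments are lists of K maps, each an H x W nested list of floats.
    """
    height, width = len(activations[0]), len(activations[0][0])
    # Channel weight = spatial average of that channel's gradient.
    weights = [sum(sum(row) for row in g) / (height * width) for g in gradients]
    cam = [[0.0] * width for _ in range(height)]
    for w, fmap in zip(weights, activations):
        for i in range(height):
            for j in range(width):
                cam[i][j] += w * fmap[i][j]
    # ReLU keeps only locations that push the score up.
    return [[max(0.0, v) for v in row] for row in cam]


# Toy example with two 2 x 2 feature maps (values are made up).
toy_activations = [[[1, 0], [0, 1]], [[0, 2], [2, 0]]]
toy_gradients = [[[1, 1], [1, 1]], [[-1, 0], [0, -1]]]
print(grad_cam(toy_activations, toy_gradients))
```

In practice the resulting map is usually upsampled to the input resolution and overlaid on the image; that step is omitted here.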

0820.

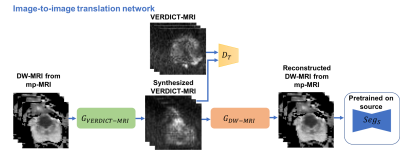

Prostate Lesion Segmentation on VERDICT-MRI Driven by Unsupervised Domain Adaptation

Eleni Chiou1,2, Francesco Giganti3,4, Shonit Punwani5, Iasonas Kokkinos2, and Eleftheria Panagiotaki1,2

1Centre of Medical Image Computing, University College London, London, United Kingdom, 2Department of Computer Science, University College London, London, United Kingdom, 3Department of Radiology, UCLH NHS Foundation Trust, University College London, London, United Kingdom, 4Division of Surgery & Interventional Science, University College London, London, United Kingdom, 5Centre for Medical Imaging, Division of Medicine, University College London, London, United Kingdom

In this work, we use unsupervised domain adaptation for prostate lesion segmentation on VERDICT-MRI. Specifically, we use an image-to-image translation method to translate multiparametric MRI data to the style of VERDICT-MRI. Given a successful translation, we use the synthesized data to train a model for lesion segmentation on VERDICT-MRI. Our results show that this approach performs well on VERDICT-MRI even though it does not exploit any manual annotations of VERDICT-MRI data.

0821.

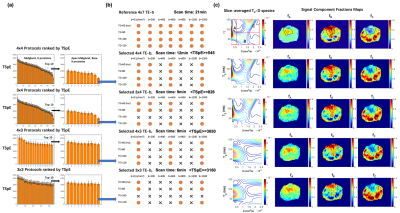

Accelerated Diffusion-Relaxation Correlation Spectrum Imaging (DR-CSI) for Ex Vivo and In Vivo Prostate Microstructure Mapping

Zhaohuan Zhang1, Sohrab Afshari Mirak1, Melina Hosseiny1, Afshin Azadikhah1, Amirhossein Mohammadian Bajgiran1, Alan Priester2, Kyunghyun Sung1, Anthony Sisk3, Robert Reiter2, Steven Raman1, Dieter Enzmann1, and Holden Wu1

1Department of Radiological Sciences, UCLA, Los Angeles, CA, United States, 2Department of Urology, UCLA, Los Angeles, CA, United States, 3Department of Pathology, UCLA, Los Angeles, CA, United States

Diffusion-relaxation correlation spectrum imaging (DR-CSI) can map prostate microstructure in both ex vivo and in vivo imaging. However, the translation of prostate DR-CSI to in vivo applications faces technical challenges regarding trade-offs between scan time and accuracy. The goal of this study was to develop a data-driven systematic framework to evaluate and select subsampled DR-CSI echo time and b-value encoding schemes that reduce scan time while maintaining the accuracy of estimated prostate microstructure parameters.

0822.

Characterization of motion-induced phase errors in prostate DWI

Sean McTavish1, Anh T. Van2, Kilian Weiss3, Johannes M. Peeters4, Marcus R. Makowski2, Rickmer F. Braren2, and Dimitrios C. Karampinos2

1Technical University of Munich, Munich, Germany, 2Department of Diagnostic and Interventional Radiology, Technical University of Munich, Munich, Germany, 3Philips Healthcare, Hamburg, Germany, 4Philips Healthcare, Best, Netherlands

In order to improve resolution without increasing geometric distortions, there is an ongoing interest in multi-shot DWI in the prostate due to the high clinical significance of prostate DWI for tumor staging and therapy monitoring. However, intershot phase variations require phase error estimation and correction to reconstruct the multi-shot DWI data. Since free-breathing scans are normally used in prostate DWI, respiratory motion can be a significant source of phase errors in the prostate. The present work aims to characterize and investigate the link between respiratory motion and phase errors in the prostate.

The International Society for Magnetic Resonance in Medicine is accredited by the Accreditation Council for Continuing Medical Education to provide continuing medical education for physicians.